Keyword Censorship in Chinese Mobile Games

How are applications and social media controlled in China? Who decides what content is censored and how are these controls implemented? Censorship in China is often portrayed as a centralized top-down system of control directly managed by the government. But peering inside this system reveals a more fragmented and decentralized regime of control than is popularly understood.

Censorship of apps and social media in China is operated through a system of intermediary liability or “self-discipline” in which companies are held responsible for content on their platforms. Failure to comply with content regulations can lead to fines or revocations of business licenses. Citizen Lab researchers Jeffrey Knockel, Lotus Ruan, and Masashi Crete-Nishihata have recently opened a view into this system from an unlikely source: games.

With an estimated value of over US$27.5 billion in 2017, China is the largest gaming market in the world. Lucrative as it is, the market presents unique challenges to companies because of its strict regulatory environment. Game companies must follow content control policies, which include submitting lists of blacklisted keywords to regulators.

In a paper presented this week at the 7th USENIX Workshop on Free and Open Communications on the Internet (FOCI), the researchers demonstrated how keyword-based censorship operates in mobile games. The paper, “Measuring Decentralization of Chinese Keyword Censorship via Mobile Games,” analyzed over 200 games and over 180,000 blacklisted keywords, testing several theories that could better explain the roles of companies and government authorities in implementing censorship.

It is well documented that government authorities in China issue directives to media and Internet companies on how to handle certain sensitive content. One way that social media companies carry out censorship is by blacklisting specific keywords, so that chat messages or online posts containing them are blocked. But research on blacklisted keywords found in various social media platforms in China (e.g., live streaming, chat apps, and microblogs) has revealed limited overlap between keyword lists across companies, suggesting that authorities do not provide companies with a centralized list of keywords.

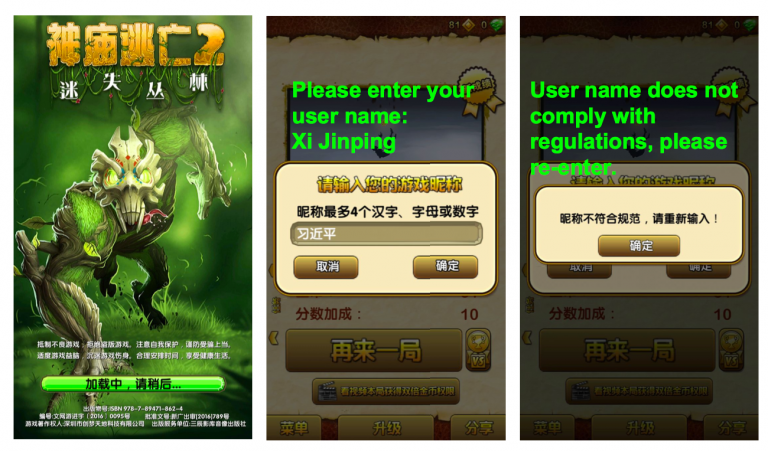

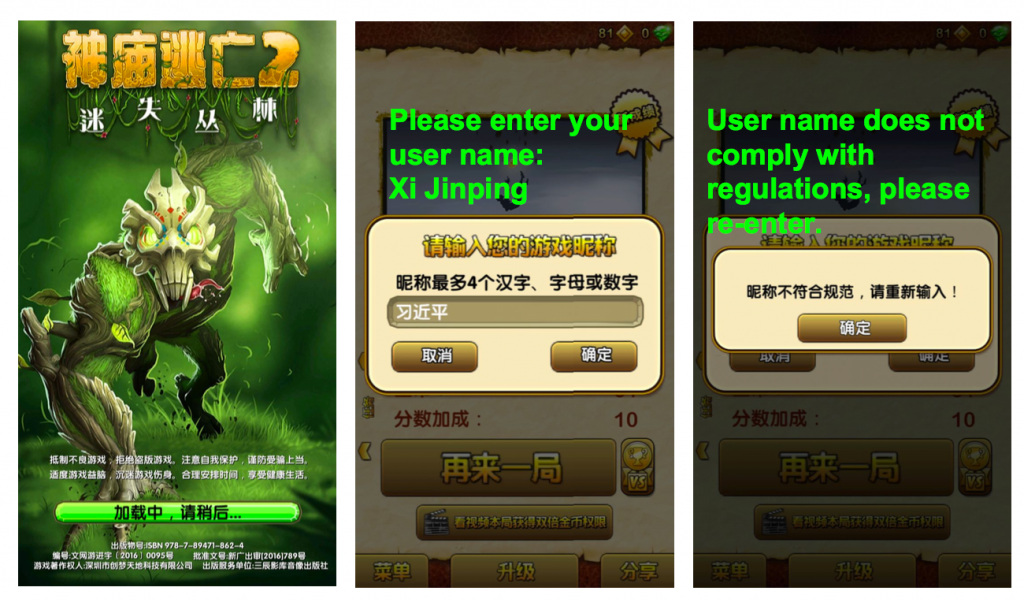

In this new paper, the researchers used blacklisted keywords found in games to better understand how these lists are created. These keywords are used to block content in player-to-player chats and user names. The games tested in the study implement censorship on the client side, meaning that the rules and keyword lists used for censorship are embedded in the application itself and can be extracted for analysis.
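To make the idea of client-side keyword filtering concrete, here is a minimal sketch in Python. It is not taken from any of the games studied: the `BLACKLIST` entries, the function names, and the choice of case-insensitive substring matching are all assumptions made for illustration.

```python
# Hypothetical keyword list. A real game would ship its own list inside
# the application, which is what makes it extractable for analysis.
BLACKLIST = ["forbiddenword", "banned phrase"]


def find_blocked_keywords(text, blacklist):
    """Return the blacklisted keywords that occur as substrings of text.

    Matching is case-insensitive here; this is an assumption, and real
    games may normalize text differently.
    """
    normalized = text.casefold()
    # Skip empty entries, which would otherwise match every message.
    return [kw for kw in blacklist if kw and kw.casefold() in normalized]


def is_allowed(text, blacklist):
    """A chat message or user name is allowed only if no keyword matches."""
    return not find_blocked_keywords(text, blacklist)


if __name__ == "__main__":
    for message in ["hello there", "this has a BANNED phrase in it"]:
        status = "allowed" if is_allowed(message, BLACKLIST) else "blocked"
        print(f"{message!r}: {status}")
```

Because the check runs entirely on the device, the list it consults has to be stored in the app, which is why the researchers could extract and compare lists across games.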

Once the keyword lists were extracted, the researchers compared them to look for similarities and tested four scenarios that could explain how blacklisted keyword lists are generated:

- Content directives are determined at the city or provincial level and may vary depending on where companies are based;

- Content directives are determined for specific genres of games;

- Content directives are related to the date that games are approved by regulators;

- Companies are under general regulatory pressures but have a degree of flexibility in determining which specific content to block.
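One simple way to quantify how similar two keyword lists are is the Jaccard index: the number of shared keywords divided by the number of distinct keywords across both lists. The sketch below is an illustration only; the article does not say which similarity measure the paper uses, and the game names and keywords are hypothetical placeholders.

```python
from itertools import combinations


def jaccard(list_a, list_b):
    """Jaccard index of two keyword lists: |A & B| / |A | B|."""
    a, b = set(list_a), set(list_b)
    if not a and not b:
        return 1.0  # Convention: two empty lists are treated as identical.
    return len(a & b) / len(a | b)


def pairwise_similarity(lists_by_game):
    """Compute the Jaccard index for every pair of games."""
    return {
        (g1, g2): jaccard(lists_by_game[g1], lists_by_game[g2])
        for g1, g2 in combinations(sorted(lists_by_game), 2)
    }


if __name__ == "__main__":
    # Hypothetical data: game_a and game_b share a publisher, game_c does not.
    lists_by_game = {
        "game_a": ["w1", "w2", "w3"],
        "game_b": ["w2", "w3", "w4"],
        "game_c": ["w9"],
    }
    for pair, score in pairwise_similarity(lists_by_game).items():
        print(pair, round(score, 2))
```

With scores like these in hand, each scenario becomes a question about grouping: if lists from games that share a region, genre, approval date, or publisher score consistently higher than lists from games that do not, that factor is a plausible predictor of list content.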

The findings show that the only consistent predictors of keyword list similarity were their publishers and developers, meaning that those making the games exercised some control over which words would be censored.

“This demonstrates how decentralized censorship is,” says researcher Jeffrey Knockel. “This suggests that the responsibility of deciding what to censor is really just pushed down [from the government].”

The findings further illustrate how the system of self-discipline regulates social media in China, and the balancing act companies must perform as they try to grow their businesses while staying within the government's red lines.

“The anaconda in the chandelier,” a term used by researcher Perry Link, aptly describes this system of censorship: a looming force high above, ready to strike if not properly abated. Here, the threat of attack is enough to alter one’s behaviour.

“There’s no doubt that China’s online gaming market is very lucrative. But as our research demonstrates, there are unique challenges for companies operating in such a restricted environment,” says researcher Lotus Ruan. “It’s like the anaconda: hanging over them even when they are only making entertainment products that have very little political intent.”