How WeChat Filters Images for One Billion Users

With over 1 billion monthly users, WeChat is the most popular chat application in China and the fourth largest in the world. A new report by Citizen Lab researchers reveals how the platform censors images sent by those billion users.

Building on previous research which shows that WeChat censors sensitive images, this new report demonstrates the technical underpinnings of how this censorship operates. Specifically, findings show that WeChat uses two different algorithms to filter images: an Optical Character Recognition (OCR)-based approach that filters images containing sensitive text and a visual-based one that filters images that are visually similar to those on an image blacklist.

“Most censorship research has thus far focused on measuring website blocking or censorship of chat, posts, and other text media,” says report author Jeffrey Knockel. “As images become an increasingly large component of how we communicate online, we need to also have a good understanding of how image censorship is implemented.”

And evidence suggests that images are gaining favour among WeChat users. In a recent study, images ranked as the most preferred type of message shared on WeChat Moments (similar to Facebook’s Timeline feature), beating out text-based posts and short videos.

“Understanding how the industry leader conducts censorship of different content formats offers us some insights into the trend of censorship and direction of future research,” says report author Lotus Ruan.

To evaluate how image censorship functions, Citizen Lab researchers devised a series of tests that revealed which images the filters would reject or allow in Moments. They discovered that the OCR-based algorithm shares details with many standard OCR algorithms: it converts images to grayscale and uses blob merging to consolidate characters. They also found that the visual-based algorithm is not based on any machine learning approach that uses high-level classification of an image to determine whether it is sensitive.

By analyzing and understanding how both the OCR- and visual-based filtering algorithms operate, researchers discovered weaknesses in both algorithms that would allow a user to upload images perceptually similar to those prohibited but that evade filtering.

For instance, a user can choose the background colour of an image’s text so that the text and its background become the same shade of gray after WeChat converts the image to grayscale. The text remains visible to a human reader but is invisible to the OCR-based filter.

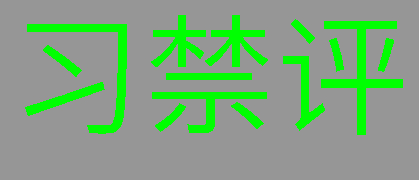

Table 1. An image with green text on a gray background whose shade matches that of the text under the luminosity formula for grayscale, and how the text would appear to an OCR algorithm under three different grayscale algorithms. If the OCR algorithm uses the same grayscale algorithm that we used to determine the intensity of the gray background, then the text effectively disappears to the algorithm.
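The grayscale trick described above can be sketched in a few lines of Python. This is a minimal illustration, not WeChat's code: the function names are hypothetical, the three conversions are common textbook definitions of the average, lightness, and luminosity methods, and the luminosity weights (0.299, 0.587, 0.114) are an assumption about which coefficients a given filter might use.

```python
def average(rgb):
    """Average method: mean of the three channels."""
    r, g, b = rgb
    return round((r + g + b) / 3)


def lightness(rgb):
    """Lightness method: midpoint of the brightest and darkest channel."""
    return round((max(rgb) + min(rgb)) / 2)


def luminosity(rgb):
    """Luminosity method with assumed weights 0.299 / 0.587 / 0.114."""
    r, g, b = rgb
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def matching_gray(text_rgb, to_gray=luminosity):
    """Pick a gray background that equals the text's gray level under to_gray."""
    level = to_gray(text_rgb)
    return (level, level, level)


def contrast(text_rgb, background_rgb, to_gray):
    """Gray-level difference between text and background after conversion."""
    return abs(to_gray(text_rgb) - to_gray(background_rgb))


text = (0, 255, 0)                 # green text, as in the example image
background = matching_gray(text)   # gray chosen using the luminosity formula

for name, fn in [("average", average), ("lightness", lightness),
                 ("luminosity", luminosity)]:
    print(name, contrast(text, background, fn))
```

Under the conversion used to pick the background, the contrast is zero, so the text has nothing to distinguish it from its surroundings. Under the other two conversions some contrast remains, which is why the trick only works when the guess about the filter's grayscale algorithm is right.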

The researchers also found that mirroring, rotating, or changing the aspect ratio of a censored image would evade the visual-based filters.
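To see why a simple geometric change can defeat a similarity check that is not based on high-level classification, consider the toy sketch below. The article does not say how WeChat measures visual similarity, so the average hash used here is only an assumed stand-in, and all names and pixel values are hypothetical.

```python
def average_hash(pixels):
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return [1 if p > mean else 0 for p in flat]


def hamming(a, b):
    """Number of positions at which two bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


def mirror(pixels):
    """Flip a grayscale image horizontally."""
    return [list(reversed(row)) for row in pixels]


# Toy 4x4 grayscale image: bright left half, dark right half.
image = [[200, 200, 20, 20] for _ in range(4)]

# A slightly brightened copy still hashes to the same bits.
brighter = [[min(255, p + 10) for p in row] for row in image]

reference = average_hash(image)
print(hamming(reference, average_hash(brighter)))       # small distance
print(hamming(reference, average_hash(mirror(image))))  # large distance
```

A measure of this kind tolerates small changes in brightness but treats a mirrored copy as a different image, even though a person would recognize the two as the same picture.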

According to Knockel, this in-depth analysis sheds light on how companies like Tencent, WeChat’s owner, operate within an environment like China.

“The algorithms to perform this kind of image filtering are computationally expensive in terms of time and energy. Performing this type of filtering is the last thing Tencent wants to be wasting money on, but the fact that it does suggests that there is a large amount of pressure Tencent receives from the Chinese government to implement it.”

The report also suggests that, while pervasive, censorship in the country is not impervious to evasion.

“Developing censorship technologies is a game of cat-and-mouse,” says Ruan. “While censorship in China is pervasive, the technologies that enable it are not always absolute. Under pressure from the government, companies will continue to develop new means to block content. Analyzing how these technologies work helps us understand the extent of information control in China.”

This research is being presented at the 2018 USENIX Free and Open Communication on the Internet (FOCI) Workshop. The FOCI paper is supplemented with a technical report that provides extended analysis.